OpenAI promised ads were a last resort. Twenty months later, ChatGPT has ads. Why AI advertising is different from everything before it.

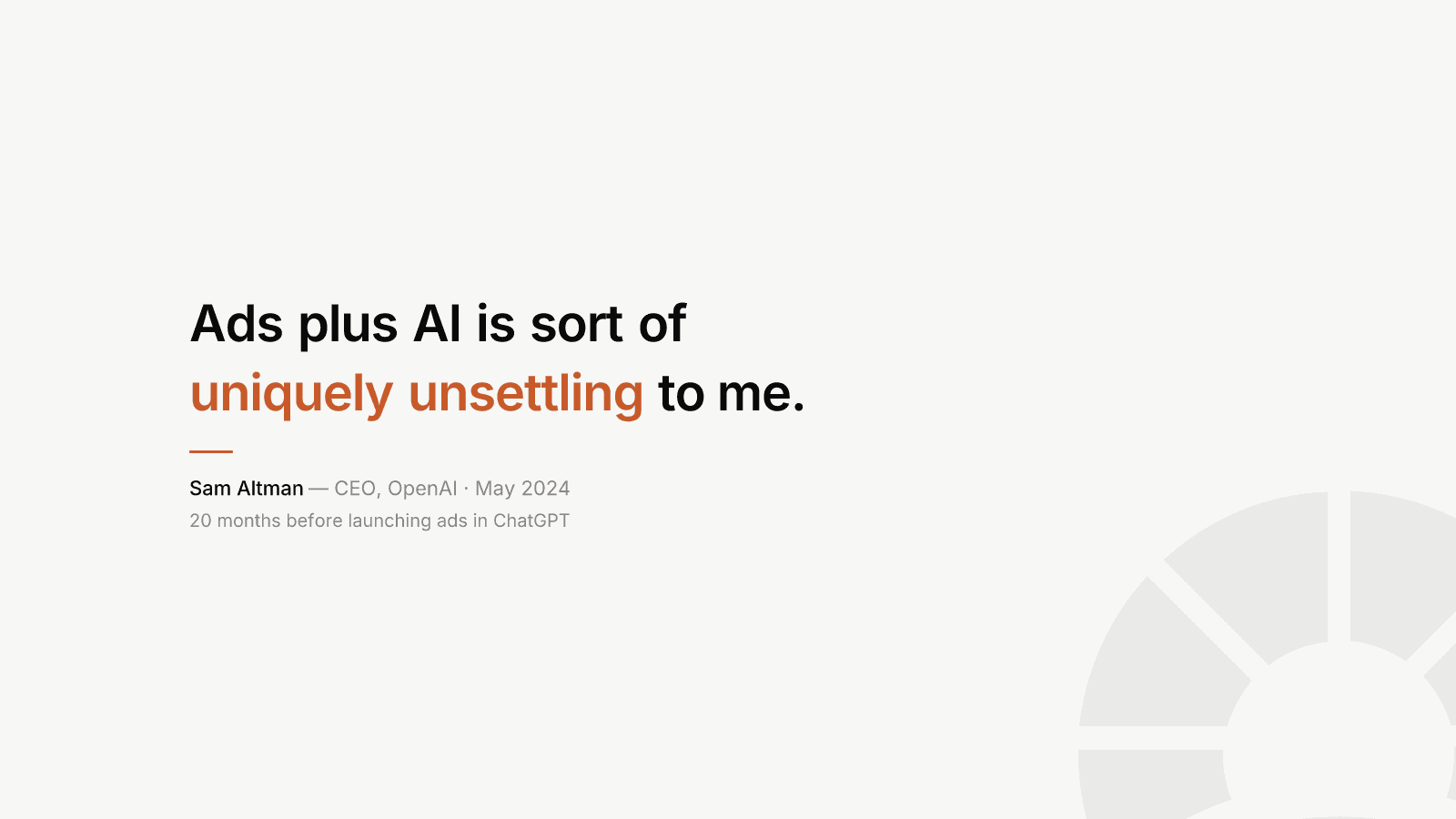

In May 2024, Sam Altman stood in front of a Harvard audience and said: "Ads plus AI is sort of uniquely unsettling to me. I kind of think of ads as a last resort for us for a business model."

He went further: ads "fundamentally misalign a user's incentives with the company providing the service."

Twenty months later, on February 9, 2026, OpenAI began rolling out ads in ChatGPT.

The "last resort" arrived remarkably fast.

Let me be clear about something: I understand why OpenAI did this. The company reportedly lost close to $8 billion in 2025. Running large language models is extraordinarily expensive. Only about 5% of ChatGPT's 800 million weekly users pay for a subscription. That's 760 million people consuming compute resources for free.

This is a real problem. I'm not pretending otherwise.

But understanding the financial pressure doesn't mean accepting that advertising was the right answer. Because this wasn't a company with no alternatives. OpenAI already had a working subscription model generating billions in revenue. They could have explored usage-based pricing, tiered compute access, enterprise partnerships, or simply accepted that a free tier should have usage limits rather than ad subsidies.

They chose the path that every tech company before them has chosen. And that's exactly the problem.

We've watched this movie before. The advertising business model has a gravity of its own, one that pulls every company toward the same outcome.

It starts with the promise that ads won't affect the product. That they'll be clearly labeled, unobtrusive, and never influence what users see.

Then the ad revenue becomes a meaningful part of the business. Internal teams are built around it. Revenue targets are set. Suddenly, the product roadmap has two customers: the person using it and the advertiser paying for access to that person.

This is not speculation. OpenAI's own internal forecasts project $1 billion in "free user monetization" in 2026, scaling to $25 billion by 2029. At that level, advertising isn't a supplement. It becomes a structural dependency.

And once a company depends on ad revenue at scale, every product decision gets filtered through a second question: how does this affect ad performance?

Here's what makes AI advertising fundamentally different from what came before.

When Google shows you ads, it knows what you searched for. When Instagram shows you ads, it knows what you liked and who you follow.

When ChatGPT shows you ads, it knows what you're thinking about.

People share things with AI assistants they wouldn't type into a search engine. Career doubts. Health anxieties. Financial insecurities. Relationship problems. Business strategies they haven't shared with their team yet.

OpenAI's own documentation confirms that ad targeting uses "the topic of your conversation, your past chats, and past interactions with ads." If memory is enabled, the system can reference your stored memories when selecting which ad to show you.

This isn't a banner ad on a website. This is an advertising system built on top of your most private conversations.

Altman himself, when announcing the rollout, pointed to Instagram's ad targeting as the model he admires, noting he's "found stuff I like that I otherwise never would have." But Instagram's targeting works precisely because of the massive personal data it collects. If ChatGPT's ads are going to be that effective, what does that tell you about how much conversational data is being leveraged?

What frustrates me most about this isn't the ads themselves. It's the missed opportunity.

For the first time, we had a product category where people were genuinely willing to pay. Not grudgingly, not after being pushed through a funnel, but proactively. People saw immediate, tangible value in AI assistants and reached for their wallets.

This was the moment to break the cycle. To prove that software could serve the person using it, not the company buying their attention. To build a business model where the product gets better because users demand it, not because advertisers need more engagement.

AI assistants had a real chance to be the thing that finally ended the era of "if you're not paying, you're the product." Instead, OpenAI looked at that opportunity and decided to turn 760 million people into inventory.

I've spent 16 years building marketing functions at SaaS companies. I've run paid acquisition. I've managed ad budgets. I understand the mechanics of advertising better than most people writing opinion pieces about it.

And that's exactly why I find this troubling. Because I know what happens inside companies once ad revenue enters the equation. The incentives shift gradually, then suddenly. The user experience team starts sharing meetings with the monetization team. Product decisions that would never survive a "does this help the user?" test get approved because they improve CPMs.

OpenAI says ads won't influence ChatGPT's answers. Maybe that's true today. But they also said ads were a last resort. Promises made under no financial pressure tend to dissolve when the pressure arrives.

The question worth asking isn't whether ChatGPT's current ads are harmful. They're banner ads for hot sauce at the bottom of a response. The question is what this decision reveals about the trajectory.

OpenAI has told us exactly where this is going. Their blog post promises to "evolve our advertising program to support additional formats, objectives and buying models and build new ways for businesses to interact with consumers in ChatGPT."

That's not a company testing a cautious experiment. That's a company building an ad platform.

Sam Altman was right in May 2024. Ads plus AI is uniquely unsettling. He just wasn't willing to live by his own conviction when the bill came due.